Metric Evaluation of Reliability and Transparency of the Videos About Carpal Tunnel Syndrome Surgery in the Online Platforms: Assessment of YouTube Videos’ Content

Abstract

Objective

To evaluate the quality and reliability of carpal tunnel syndrome surgery videos on YouTube.

Methods

The keyword set “carpal tunnel syndrome surgery” was searched on YouTube. The DISCERN scoring system, the Journal of the American Medical Association (JAMA) scoring system, and the Health on the Net (HON) ranking system were used to evaluate the quality and reliability of the first 50 videos that appeared in the search results. The characteristics of each video, such as the number of likes, dislikes, and views, the number of days since upload, video length, and the uploader, were collected retrospectively. The relationships between video quality and these factors were investigated statistically.

Results

All of the sampled videos were found to be of poor quality (mean DISCERN score, 1.71 of 5; mean JAMA score, 1.76 of 4; mean HON score, 5.65 of 16). However, the DISCERN scores of the videos uploaded by medical centers were higher than those of the others (p = 0.022). No relationship was detected between the other variables and video quality.

Conclusion

Healthcare professionals and organizations should be more cautious when recording and uploading videos to online platforms. As those videos can reach a wide audience, their content should provide more information about the possible complications of a treatment and about other treatment modalities.

INTRODUCTION

Today, YouTube is the largest online video hosting platform in the world, and it has become increasingly popular as a source of medical information [1]. Patients and their relatives commonly visit YouTube to search for readily available information about their illness and possible treatment methods [2]. As YouTube is easily accessible, patients are also accustomed to watching online videos before undergoing a planned operation to learn about the procedure and its potential risks and complications. Even some healthcare professionals, such as surgery residents and junior surgeons, are known to use this platform to improve their knowledge or learn new surgical techniques. Thus, in evaluating the quality of YouTube videos, our target audience is not only patients and their relatives but also surgery residents and junior surgeons.

On the other hand, given their role in educating both patients and surgeons, these videos should be examined regularly, and their reliability should be evaluated. More than 1,500 studies in the literature examine YouTube videos with medical content. Many of these studies suggest that the majority of those videos can be categorized as unreliable educational material. Although the reliability of YouTube videos about medical issues has become a popular topic in recent years, no study has investigated the quality and reliability of YouTube videos about carpal tunnel syndrome surgery (CTSS). In light of this, the purpose of this study is to evaluate the quality of the videos about CTSS that are available on YouTube.

MATERIALS AND METHODS

In September 2020, a search was made on YouTube with the English keywords “carpal tunnel syndrome surgery.” No filters were applied. “Relevance-based ranking” was applied as the ranking criterion and the first 50 videos in the search results were selected, similar to the method of a previous study [3].

The following data were collected for each video: the time elapsed since upload, the video length, the uploader, and the number of views, likes, and dislikes. The uploaders were divided into 3 categories: (1) doctor, (2) medical center (institute, hospital, or clinic), and (3) medical media agency. The categories were assigned as follows: videos including only a doctor’s name in the video title or information were put in the “doctor” category; videos including the name of a hospital, institute, or clinic were put in the “medical center” category; and videos uploaded by agencies were put in the “medical media agency” category.

The videos were retrospectively reviewed by 3 independent senior clinicians (OO, FD, OB) using the DISCERN, Journal of the American Medical Association (JAMA), and Health on the Net (HON) ranking systems. Each video was scored separately by each reviewer, and the mean score for each video was calculated.

DISCERN is a questionnaire designed to evaluate the quality and reliability of health information. Videos are scored on 15 questions, each rated on a 5-point scale, and are then labelled as “poor,” “moderate,” or “good” in terms of quality. The mean score across the 15 questions is taken as the video’s final score [4]. The first 8 questions focus on the reliability of the information, while the last 7 questions examine the treatment options offered (Table 1). The DISCERN score is rated as “good” if it is higher than 3, “moderate” if it is 3, and “poor” if it is less than 3.
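The DISCERN scoring rule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names are hypothetical, the 1 to 5 range per question is assumed from the 5-point scale, and the averaging over raters mirrors the three-reviewer procedure rather than reproducing the authors' actual workflow.

```python
from statistics import mean

N_QUESTIONS = 15           # number of questions, as stated in the text
MIN_ITEM, MAX_ITEM = 1, 5  # assumed range of each question score


def discern_final_score(question_scores):
    """Return one rater's final score: the mean of the per-question scores."""
    if len(question_scores) != N_QUESTIONS:
        raise ValueError(f"expected {N_QUESTIONS} scores, got {len(question_scores)}")
    if any(not MIN_ITEM <= s <= MAX_ITEM for s in question_scores):
        raise ValueError("each question score must lie between 1 and 5")
    return mean(question_scores)


def discern_label(score):
    """Map a final score to the quality label used in the text."""
    if score > 3:
        return "good"
    if score == 3:
        return "moderate"
    return "poor"


def video_score(rater_scores):
    """Average the final scores of several independent raters (hypothetical helper)."""
    return mean(discern_final_score(scores) for scores in rater_scores)


# Hypothetical example: three raters scoring the same video.
example = [[1] * 15, [2] * 15, [2] * 15]
print(discern_label(video_score(example)))  # a mean below 3 is labelled "poor"
```

Because "moderate" is defined as a score of exactly 3, a mean over several raters will rarely land in that category; a study applying this rule in practice might prefer a band around 3, but that choice is not specified in the text.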

The JAMA evaluation criteria were used to evaluate video accuracy and reliability [5]. The JAMA benchmark is a nonspecific, objective assessment consisting of 4 criteria (Table 2). Each criterion is worth 1 point. A total score of 4 indicates high accuracy and reliability of the source, while a score of 0 indicates poor accuracy and reliability. These criteria have been applied extensively in previous studies to evaluate the reliability of online resources [3].
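As a minimal sketch, the JAMA score is simply a count of the criteria a video satisfies. The four criterion names used below (authorship, attribution, disclosure, currency) are the commonly cited JAMA benchmarks and are an assumption here, since Table 2 is not reproduced in this text; the function name is hypothetical.

```python
# Assumed criterion names; Table 2 of the article is the authoritative list.
JAMA_CRITERIA = ("authorship", "attribution", "disclosure", "currency")


def jama_score(criteria_met):
    """Count satisfied criteria: 1 point each, giving a total from 0 to 4.

    criteria_met maps a criterion name to True or False; missing names
    are treated as not satisfied.
    """
    unknown = set(criteria_met) - set(JAMA_CRITERIA)
    if unknown:
        raise ValueError(f"unknown criteria: {sorted(unknown)}")
    return sum(1 for name in JAMA_CRITERIA if criteria_met.get(name, False))


# Hypothetical video that names its author and states when it was produced.
print(jama_score({"authorship": True, "currency": True}))
```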

The HON is an assessment method that aims to improve the quality of health information on the internet, including YouTube and other online platforms [6]. HON examines the transparency and accuracy of online information. The HON score primarily covers the following ethical aspects: author credentials, date of the latest modification of clinical documents, data confidentiality, source data references, funding, and advertising policy (Table 3). The maximum HON score is 16: 5 points for accessibility and transparency of information, including valid contact information; 5 points for reference to the authors’ credentials; 3 points for accountability; 1 point for the privacy policy for user information; 1 point for stating when the information was last updated; and 1 point for accessibility [6,7]. A HON score of 12 or above out of 16 indicates that a YouTube video is fairly reliable [6,7].
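The point allocation above can be written down as a small lookup table, which also makes it easy to confirm that the components add up to 16. The component keys and function names below are hypothetical labels chosen for illustration; only the point values and the threshold of 12 come from the text.

```python
# Maximum points per component, as listed in the text (keys are illustrative).
HON_MAX_POINTS = {
    "transparency_and_contact": 5,
    "author_credentials": 5,
    "accountability": 3,
    "privacy_policy": 1,
    "last_updated": 1,
    "accessibility": 1,
}
RELIABLE_THRESHOLD = 12  # "12 or above out of 16" per the text


def hon_score(points):
    """Sum the awarded points, checking each against its component maximum."""
    unknown = set(points) - set(HON_MAX_POINTS)
    if unknown:
        raise ValueError(f"unknown components: {sorted(unknown)}")
    total = 0
    for component, max_points in HON_MAX_POINTS.items():
        awarded = points.get(component, 0)
        if not 0 <= awarded <= max_points:
            raise ValueError(f"{component}: {awarded} is outside 0..{max_points}")
        total += awarded
    return total


def is_fairly_reliable(score):
    """Apply the threshold stated in the text."""
    return score >= RELIABLE_THRESHOLD


# Hypothetical video: full credentials, partial accountability, nothing else.
score = hon_score({"author_credentials": 5, "accountability": 1})
print(score, is_fairly_reliable(score))
```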

IBM SPSS Statistics ver. 25.0 (IBM Co., Armonk, NY, USA) was used for statistical analysis. The Kolmogorov-Smirnov test was used to examine the normality of the distributions. According to the results of the normality analyses, the data were not normally distributed. Descriptive statistical methods (frequency, percentage, mean, standard deviation) were used to evaluate the demographic data. The Kruskal-Wallis test was used to compare the quantitative data of the 3 groups. Spearman correlation analysis was performed to analyze the associations between the quantitative data. The results were evaluated at a confidence level of 95% and a significance level of p < 0.05.
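To illustrate the three-group comparison, the following is a minimal pure-Python sketch of the Kruskal-Wallis test. It is not the SPSS procedure used in the study: the function names and the example data are hypothetical, and the p-value relies on the usual chi-square approximation, which for 3 groups (2 degrees of freedom) has the closed form exp(-H/2).

```python
import math


def average_ranks(values):
    """Return 1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # average position of the tied block
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def kruskal_wallis_3_groups(a, b, c):
    """Return (H, p) for three independent groups, with tie correction."""
    groups = [a, b, c]
    pooled = [x for group in groups for x in group]
    n = len(pooled)
    ranks = average_ranks(pooled)

    weighted = 0.0
    start = 0
    for group in groups:
        rank_sum = sum(ranks[start:start + len(group)])
        weighted += rank_sum ** 2 / len(group)
        start += len(group)
    h = 12 / (n * (n + 1)) * weighted - 3 * (n + 1)

    # Tie correction: divide H by 1 - sum(t^3 - t) / (n^3 - n).
    counts = {}
    for x in pooled:
        counts[x] = counts.get(x, 0) + 1
    correction = 1 - sum(t ** 3 - t for t in counts.values()) / (n ** 3 - n)
    if correction == 0:
        raise ValueError("all observations are identical")
    h /= correction

    p = math.exp(-h / 2)  # chi-square survival function with 2 degrees of freedom
    return h, p


# Hypothetical scores for three uploader groups (not data from the study).
h, p = kruskal_wallis_3_groups([1, 2, 3], [4, 5, 6], [7, 8, 9])
print(round(h, 3), p < 0.05)
```

With groups this small the chi-square approximation is rough, so statistical packages may report an exact p-value instead.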

RESULTS

Of the 50 videos analyzed, all scored less than 3 out of 5 according to the DISCERN score. The mean DISCERN score was 1.71 out of 5. The average JAMA score was 1.76 out of 4, with a range of 0–3. The average HON score was 5.65 out of 16, with a range of 1–11. None of the videos scored 12 points or above.

Of the videos, 36% were produced and uploaded by doctors, 24% by medical centers, and 40% by medical media agencies. Although all of the videos were in the poor-quality group, DISCERN scores differed significantly by uploader (p = 0.022) (Table 4). The DISCERN scores of videos made by medical centers were higher than those of the others. JAMA and HON scores did not differ significantly by uploader (p = 0.160 and p = 0.114, respectively) (Table 4).

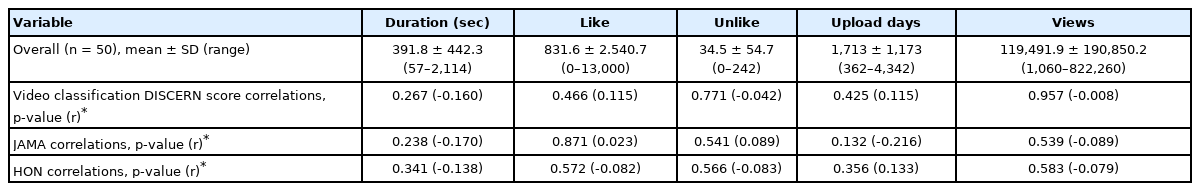

The videos examined were uploaded between 2009 and 2019. The mean time since upload was 1,713 ± 1,173 days (range, 362–4,342); the mean video length was 391.8 ± 442.3 seconds (range, 57–2,114); the total number of views of the 50 videos was 5,974,598; and the mean number of views was 119,491.9 ± 190,850.2 (range, 1,060–822,260). The mean number of likes was 831.6 ± 2,540.7 (range, 0–13,000), and the mean number of dislikes was 34.5 ± 54.7 (range, 0–242). Consistent with the results of other studies, these results show that the criteria commonly applied in ranking videos, such as the time elapsed since upload, the number of views, likes, or dislikes, and the length of a video, were not associated with video quality (Table 5).
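The association between a video feature (such as view count) and a quality score can be examined with a Spearman rank correlation, which is the Pearson correlation computed on ranks. The sketch below is a pure-Python illustration with hypothetical function names and made-up data; it does not reproduce the values in Table 5.

```python
import math


def average_ranks(values):
    """Return 1-based ranks; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rho: Pearson correlation of the rank-transformed data."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    rx, ry = average_ranks(x), average_ranks(y)
    mean_x = sum(rx) / len(rx)
    mean_y = sum(ry) / len(ry)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(rx, ry))
    var_x = sum((a - mean_x) ** 2 for a in rx)
    var_y = sum((b - mean_y) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        raise ValueError("rho is undefined when one variable is constant")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical view counts and quality scores for five videos.
views = [1060, 5200, 48000, 120000, 822260]
quality = [1.9, 1.4, 2.1, 1.6, 2.3]
print(round(spearman_rho(views, quality), 2))
```

A rho near zero, together with a non-significant p-value from a statistical package, would correspond to the "no association" finding reported in the text.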

DISCUSSION

In the present study, videos about CTSS on YouTube, the leading online video sharing platform, were evaluated. The sampled videos on this subject were found to be unreliable and unchecked. A substantial body of literature examines the reliability of the YouTube videos watched by patients to gather medical information, including in the field of neurosurgery [3,8-13]. Those studies investigated the reliability of videos posted on YouTube on subjects such as spinal surgery, brain tumors, intracranial aneurysms, and deep brain stimulation surgery [3,8-13]. They generally suggest that YouTube videos usually fall short of providing complete medical information.

To the best of our knowledge, no previous study has evaluated the reliability and accuracy of the information in CTSS-related videos on YouTube. In our study, we examined 50 videos on this topic, sampled according to “relevance-based ranking.” The first 50 videos that appeared in the keyword search results were selected for 2 reasons. First, the YouTube search engine seems to show the videos with the highest number of views first, so a sample of those videos could give a true picture of the impact of the videos on healthcare professionals and the general public. Second, any person seeking information about CTSS on YouTube is likely to search the same or similar keywords to get the most relevant results. We therefore think that our search criteria provided an appropriate sample for evaluating the CTSS-related videos with the highest impact and widest reach. The results showed that all videos had a DISCERN score below 3 (poor) and a HON score below 12, and that JAMA scores were very low (mean, 1.76 of 4). The reliability of the videos containing preoperative medical information was also low. For example, only a small number of videos mentioned the source of the information featured. It was also not clear when the information discussed in the videos was produced, and no details of additional sources of information were disclosed. Some videos discussing a treatment method mentioned alternative methods as well, but their approach to the alternatives seemed neither well-balanced nor impartial. The potential risks and benefits of a discussed treatment method were not thoroughly described or compared with those of alternative therapies. The issue of how a proposed treatment option would affect a patient’s overall quality of life was neglected. Thus, the reliability of those videos was considered to be low. Nevertheless, medical centers overall produced relatively better-quality and more reliable videos: the videos uploaded by medical centers had higher DISCERN scores, while there was no significant difference in JAMA and HON scores. No other factors were found to be significantly associated with a higher DISCERN, JAMA, or HON score.

The standard deviations of video features such as length, views, and dislikes were higher than their mean values. These results also suggest that the CTSS-related videos on YouTube follow no standards, are unreliable, and are not checked by a professional.
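The observation that the standard deviation exceeds the mean can be expressed as a coefficient of variation (SD divided by mean) greater than 1. The short sketch below applies this to the mean ± SD values reported in the Results; the dictionary keys and function name are illustrative, not part of the study.

```python
# (mean, standard deviation) pairs as reported in the Results section.
REPORTED = {
    "upload_days": (1713.0, 1173.0),
    "length_seconds": (391.8, 442.3),
    "views": (119491.9, 190850.2),
    "likes": (831.6, 2540.7),
    "dislikes": (34.5, 54.7),
}


def coefficient_of_variation(mean_value, sd):
    """Return SD / mean; values above 1 mean the SD exceeds the mean."""
    if mean_value == 0:
        raise ValueError("coefficient of variation is undefined for a zero mean")
    return sd / mean_value


# Features whose spread is larger than their average value.
overdispersed = [
    name
    for name, (mean_value, sd) in REPORTED.items()
    if coefficient_of_variation(mean_value, sd) > 1
]
print(overdispersed)
```

For non-negative counts such as views or likes, a coefficient of variation well above 1 usually signals a strongly right-skewed distribution, in which a few videos account for most of the total.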

In this regard, healthcare professionals should be aware that thousands of videos about diseases and their treatment methods are readily available on YouTube and that many patients watch them. As it is impossible to edit those videos or undo their impact, uploaders should at least be more sensitive to and conscious of the impact their videos have, especially on the general public. A health-related video aiming to make a positive impact and contribute to public health should professionally discuss the following categories of information: pathophysiology of the relevant disease, the natural course of the disease if untreated, treatment options, unbiased comparison of treatment options, potential complications of treatment options, possible complications related to anesthesia if used, clear mention of all sources of the information, and expected effects of the treatment on general quality of life.

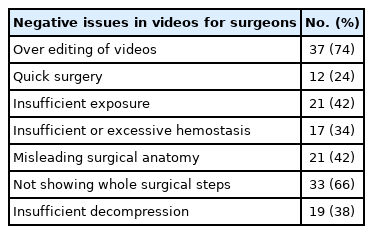

On the other hand, these videos are also watched by residents and junior surgeons for their surgical development and training. Thus, missing or misleading information in these videos can lead to irreparable consequences. A video containing only partial information about a surgery could be considered practical and time-saving by junior surgeons, thereby misleading and misinforming them. For example, while decompression of the median nerve takes at least 10 minutes, a video that fast-forwards and shortens this duration to attract more viewers might make a healthcare professional think that the operation could be completed in less than 10 minutes. However, shortened videos very likely do not cover all essential aspects of a surgery. In such cases, videos showing an operation continued with insufficient hemostasis, or rushed to finish in a shorter than ideal duration, would give junior surgeons at best incomplete and misleading information, ultimately undercutting their training. The downsides of those videos for surgery residents and junior surgeons are summarized in Table 6. The negative impacts of the problematic videos fall in the range of 24%–74%. The problems in medical videos may be neglected as long as their target audience is patients and the general public. However, as the videos are also used by residents and junior surgeons as training material, these problems should be addressed properly. There is therefore a need for further research to raise awareness of this problem and to devise ways to end healthcare professionals’ exposure to misleading information. Table 6 lists some basic issues as a contribution to this discussion, and there is room for new studies to develop these topics further.

It is a fact that most medical YouTube videos have been made and uploaded by health professionals and medical centers for the purpose of advertising. In addition, there is a legitimate concern that most of the videos have been made public without obtaining consent for the patients’ surgical images or any other scenes involving them, and that the ethical rules protecting the privacy and personal information of patients have not been duly respected. There is no information on whether the procedures performed in these videos comply with the ethical standards of the relevant institutional and/or national research committees, the 1964 Declaration of Helsinki, or any comparable ethical standards. We believe that in the near future there may be new online open-source media forums, under YouTube’s lead or on alternative platforms, that commit to the Declaration of Helsinki and comply with ethical principles and international scientific publication standards.

It should also be noted that YouTube hosts numerous high-quality medical resources and could thereby offer useful options for informing patients and the general public, training health professionals, and, last but not least, providing a connection between professionals and patients. However, because of the shortcomings in the videos and the lack of an effective mechanism to separate fact from fiction, this does not seem possible for the time being [5]. As far as providing reliable medical information is concerned, YouTube is comparable to a dinner chat rather than an effective healthcare communication and decision-making platform.

CONCLUSION

YouTube provides patients and health professionals with easy access to a large amount of information on CTSS. However, the poor quality and unreliability of the medical videos are a reason to be cautious. Health professionals should inform patients about the limitations of YouTube videos and refer them to appropriate sources of information to reduce their exposure to misinformation. Health professionals should likewise avoid using online videos as training material for the same reason. Yet, given the global impact of online platforms such as YouTube, health professionals and medical centers should make use of this opportunity to disseminate correct and easily digestible medical information to the general public. Such efforts would certainly contribute to protecting public health in general and preventing the spread of misinformation in particular.

Notes

The authors have nothing to disclose.